Though more than a thousand years old, unique in its written form and sentence structure, and used every day by more than a billion people, the Chinese language has rarely been used to study the cognitive and neural mechanisms of speech perception. Recently, NYU Shanghai Assistant Professor of Neural and Cognitive Sciences Tian Xing sought to fill this gap by studying how the human brain reacts to and processes ancient Chinese poetry.

Classical Chinese poetry famously adheres to rigid structures regarding rhythm, rhyme, and format. Tian and collaborators from the Max Planck Institute for Empirical Aesthetics, Google, and East China Normal University decided to make use of the unique structure of ancient Chinese poems to uncover how the structure of content affects people’s perception of speech. The findings were published in the leading science journal Current Biology.

Chinese ink painting of the cerebral cortex, overlaid with the illustration and calligraphy of the Classical Chinese Jueju ‘Jiang Xue’ (River and Snow). Calligraphy by Gong Chi; painting by Li Fuan; image edited by Sun Jiaqiu.

“Previous research has already studied how people perceive speech based on various cues, such as meaning, syntax, and so on. We are among the first to focus on the effect of the content structure on speech perception,” said Tian. “In addition, the use of poems to conduct scientific experimentation is also rare in neuroscience research.” Tian believes that findings from this research would help uncover new ways of thinking about neuroscience, education, the arts, and culture.

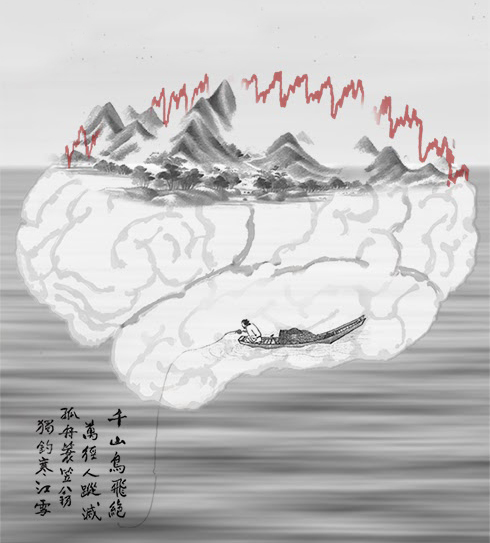

The researchers decided to use Jueju, or Chinese quatrains made up of five or seven characters in each line, with an alternating rhyme scheme in the second and fourth lines. They are one of the most representative examples of Classical Chinese poetry, and form a major part of the Chinese public school language curriculum, so the researchers were sure they could find plenty of participants who would be familiar with the structure of Jueju.

Example of Jueju.

When selecting the poems to show participants, the researchers faced a challenge. They did not want to show participants any Jueju that they were familiar with, because that familiarity might affect how their brains processed the verses. Researchers found a novel way to avoid “contaminating” the pool of content they used by reaching out to Ma Min, an expert from Google, who specializes in natural language processing and is well-versed in ancient Chinese poetry.

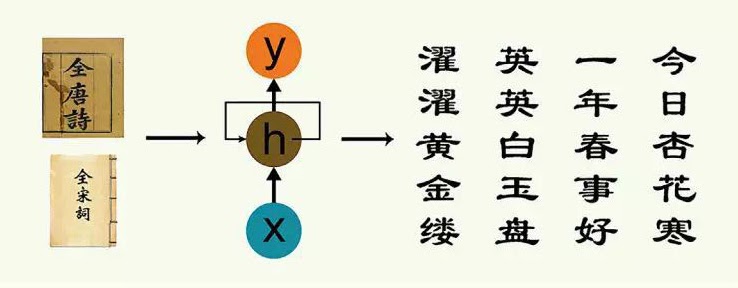

Ma built an artificial intelligence program that “learned” how to generate brand new Jueju by analyzing the structure of thousands of existing Classical Chinese poems. These AI-generated poems formed the bulk of their test material, to which they also added 30 obscure Jueju. The poems were read out loud to participants using artificial speech synthesis to remove any emotion, pauses, or rhythm. In other words, a quatrain with five characters in each line would be read as one continuous stream of 20 characters. As a result, participants had to rely on their existing familiarity with the structure of Jueju to process what they were hearing.

An artificially generated Jueju written in Classical Chinese style.

The study involved 13 native speakers of Mandarin Chinese who were formally educated in China, which meant that every participant would have a grounding in the structure of Jueju. Each participant listened to 180 poems (150 artificial and 30 real), and each poem was played twice. While they listened, their brains were monitored by a magnetoencephalography scanner, which mapped their brain activity by recording the magnetic fields produced by electrical currents occurring naturally in the brain.

Magnetoencephalography scanner (image source: the United States Department of Health and Human Services).

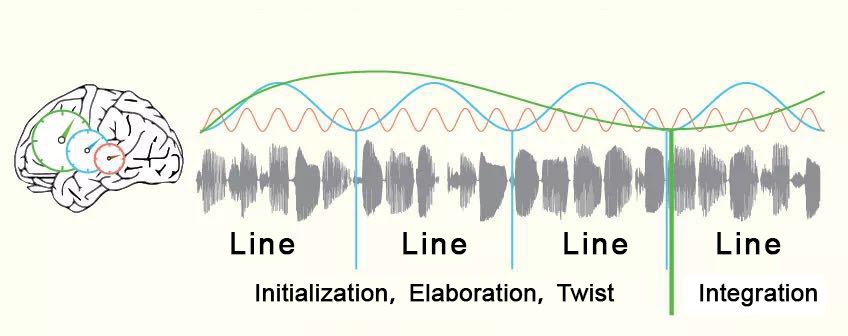

Tian and his team told participants that they would be hearing a Jueju read out loud, and played the recording for them. Afterwards, they discovered a brain rhythm (shown as a blue wave in the image below) that oscillated with the speed of each line of the poems. This indicated to the researchers that as long as the participants knew they were hearing a Jueju, their brains would automatically “parse” content, breaking it into four lines with five characters in each line.
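The parsing described above can be pictured with a minimal sketch. It assumes a five-character Jueju arriving as one unbroken stream of 20 characters and cuts it into four lines using nothing but prior knowledge of the form. The function name `split_into_lines` is hypothetical, and the poem used is the well-known ‘Jiang Xue’ named in the illustration caption, chosen only for illustration; the study itself deliberately used poems that participants did not know.

```python
def split_into_lines(stream: str, chars_per_line: int = 5) -> list[str]:
    """Cut a continuous character stream into fixed-length lines.

    This is only an illustration of structure-based chunking; it is not
    a model of how the brain performs the parsing.
    """
    if len(stream) % chars_per_line != 0:
        raise ValueError("stream length must be a multiple of chars_per_line")
    return [
        stream[start:start + chars_per_line]
        for start in range(0, len(stream), chars_per_line)
    ]


# 'Jiang Xue' (River and Snow) with punctuation removed: 20 characters.
JIANG_XUE = "千山鸟飞绝万径人踪灭孤舟蓑笠翁独钓寒江雪"

lines = split_into_lines(JIANG_XUE)
for line in lines:
    print(line)
```

Nothing in the stream itself marks where a line ends; the boundaries come entirely from the assumed line length, which is the point the researchers make about listeners relying on their knowledge of the form.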

Researchers discovered an additional brain rhythm (shown as a green wave in the image below) that oscillated with the speed of the first three lines. Tian’s team hypothesized that this rhythm might have something to do with another structural element of Jueju, wherein the four lines of a quatrain act as Qi (initialization), Cheng (elaboration), Zhuan (continuum/twist), and He (integration), respectively. As the fourth line acts as a conclusion to the previous three lines, the researchers thought it very likely that the dip in the brain rhythm reflected the listener separating the final line from the preceding three. Later, the research team used a computational model to create a simulation based on their hypothesis, and the results indicated that their hypothesis was likely to hold.

Brain rhythms superimposed on top of sound waves. Researchers discovered three types of brain rhythms: The red line corresponds to each word of the poem, the blue line corresponds to each line, and researchers believe that the green line corresponds to participants’ learned expectations of the overarching structure of Jueju.

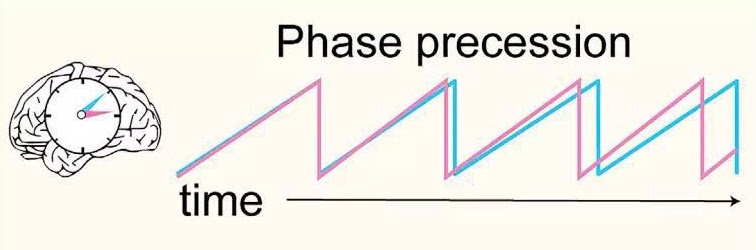
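The relationship between the per-character and per-line rhythms is simple arithmetic, sketched below. The article does not state how fast the synthesized speech was, so the rate of 4 characters per second is a purely hypothetical value, and `rhythm_rates` is an illustrative helper rather than anything from the study.

```python
def rhythm_rates(chars_per_second: float, chars_per_line: int = 5,
                 lines_per_poem: int = 4) -> dict[str, float]:
    """Derive line-level timing from an evenly paced character stream."""
    chars_per_poem = chars_per_line * lines_per_poem
    return {
        # One cycle per character.
        "character_rate_hz": chars_per_second,
        # One cycle per line: five characters share one cycle.
        "line_rate_hz": chars_per_second / chars_per_line,
        # Time taken by a single line and by the whole quatrain.
        "line_duration_s": chars_per_line / chars_per_second,
        "poem_duration_s": chars_per_poem / chars_per_second,
    }


# Hypothetical speech rate; the article does not report the real one.
rates = rhythm_rates(chars_per_second=4.0)
for name, value in rates.items():
    print(f"{name}: {value}")
```

Under this assumed rate, a rhythm that tracks lines runs five times slower than one that tracks individual characters, which is why the two appear as distinct waves.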

Additionally, the team found that when participants listened to a poem for the second time, their brain rhythm would advance faster than the first time due to their familiarity with the material. This phenomenon was first discovered in the field of neuroscience dedicated to spatial navigation, wherein experiments involving mice running through mazes found that the mice’s brain rhythm advanced faster in subsequent tries. Tian’s study is the first in which researchers have observed this faster advancement of brain rhythms in the processing of sequential signals in speech perception.

Brain rhythms advanced faster when participants listened to the poem for the second time (pink line) compared to the first time (blue line).

This study not only provides new insights for neuroscience research but also has applications in education and art. Currently, language education mainly involves vocabulary, listening, reading, and grammar practice, but the results of this study indicate that the study of structure may aid language acquisition.

Tian says that scientists studying the neural mechanisms of art appreciation can also glean new ideas from the study. “Eric R. Kandel, one of the founding fathers of neuroscience, once speculated that ‘art happens when predictable structure encounters unforeseen content,’ and it just so happens that our findings fit with his assumption,” said Tian. “Because we have discovered that people rely on basic structures when interpreting the poetry of the ancients. Perhaps artists will find these ideas useful in their practice.”

“We can make an even bolder hypothesis and ask the question: Is there a neurological basis for cultural differences? Chinese people have been creating and appreciating Jueju for thousands of years, being immersed in this specific cultural form,” says Tian. “Could it be that our brains are more suited to processing information with specific formats and structures? This is a cultural question worth exploring more deeply.”

In order to continue exploring in this vein, Tian plans to include other art forms in his future research. “We can use the same paradigm and apply it in an interdisciplinary way to behavioral science, brain science, education, art, and culture,” says Tian. “By including different fields of study and encompassing diverse perspectives, we can open more doors for the study of speech perception and cognitive neuroscience.”

Tian is the primary corresponding author of this paper. Teng Xiangbin and Stefan Blohm from the Max Planck Institute for Empirical Aesthetics, Ma Min from Google, Professor Cai Qing from East China Normal University, and Yang Jinbiao, a research assistant at NYU Shanghai during the study, also contributed to the research.